A Framework for Evaluating AI Tools Before Adoption in 2026

Most teams evaluate AI tools based on features. The right question is whether the tool fits the workflow.

Why Tool Selection Usually Fails

The most common mistake in AI adoption is not choosing the wrong tool. It’s choosing a tool before understanding the process it is supposed to improve.

Teams see a demo, read a feature list, and map the tool to a vague goal (“we need to be more efficient”). But tools improve steps, not goals. With no specific step to attach to, the tool sees sporadic use and is quietly abandoned within a quarter.

Feature lists tell you what a tool can do in theory. They say nothing about whether it maps to anything your team actually does.

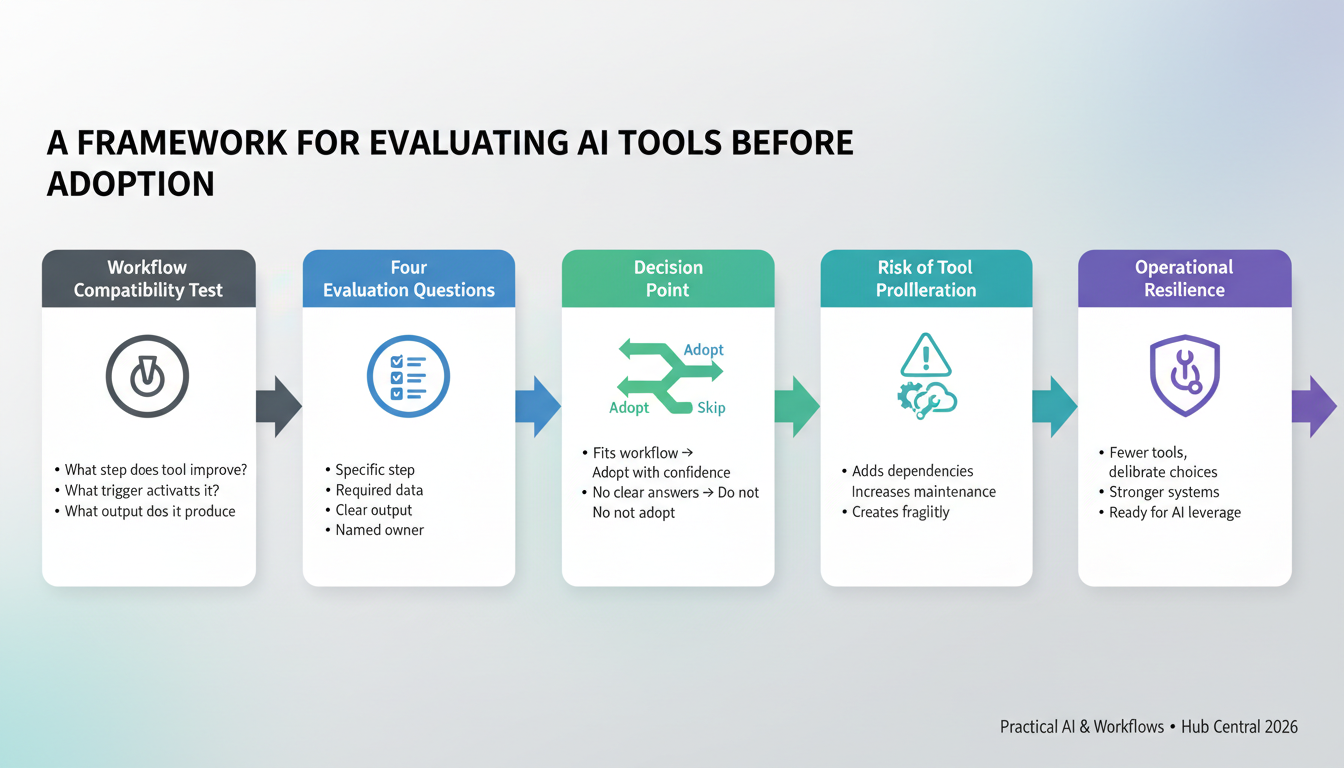

The Workflow Compatibility Test

Before evaluating any AI tool, run it through these three diagnostic questions:

- What step does the tool improve? Name the exact action in your defined sequence (not “research” or “writing”).

- What trigger activates it? What event or condition causes someone to reach for this tool?

- What output does it produce? Where does that result go next in the workflow?

If you cannot answer all three clearly, the evaluation is premature. The problem is not the tool; the workflow has not been mapped yet.
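For teams that keep evaluation notes in a script or notebook, the three questions can be written down as a small record with a check for gaps. This is only a minimal sketch: the names `CompatibilityAnswers`, `unanswered`, and `evaluation_is_premature` are hypothetical, and the set of "too vague" labels simply reuses the two examples given above.

```python
from dataclasses import dataclass

# Assumption: labels this broad do not count as a named step.
# These two come from the examples in the first question.
VAGUE_STEPS = {"research", "writing"}


@dataclass
class CompatibilityAnswers:
    step: str     # the exact action the tool improves
    trigger: str  # the event or condition that makes someone reach for it
    output: str   # what it produces and where that result goes next


def unanswered(answers: CompatibilityAnswers) -> list[str]:
    """Return the questions that are blank or too vague to count."""
    gaps = []
    step = answers.step.strip()
    if not step or step.lower() in VAGUE_STEPS:
        gaps.append("step")
    if not answers.trigger.strip():
        gaps.append("trigger")
    if not answers.output.strip():
        gaps.append("output")
    return gaps


def evaluation_is_premature(answers: CompatibilityAnswers) -> bool:
    """True when any of the three questions lacks a clear answer."""
    return bool(unanswered(answers))
```

A made-up example: `CompatibilityAnswers("research", "", "draft")` leaves the step and trigger questions open, so evaluating the tool would be premature until the workflow is mapped.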

See also: What Is a Workflow? Definition, Examples, and Why Workflows Matter

The Four Evaluation Questions

Once a workflow step exists for the tool to improve, ask these four questions:

- What step does this tool improve? Be specific enough that you could remove the tool and know exactly what would suffer.

- What data does it require? Are the inputs available, structured, and accessible in your current environment?

- What output does it produce? Is the deliverable clear and does it connect directly to the next step?

- Who owns the result? Every output needs a named person responsible for reviewing or acting on it.

The narrower the answers, the better the fit.

See also: When Should You Introduce AI Into a Workflow?

The Risk of Tool Proliferation

Each new tool adds dependencies, maintenance, onboarding, and future decisions. One or two well-chosen tools add capability. Ten loosely chosen tools add fragility.

Use the framework above as a filter. If you cannot answer all four questions about a tool with specificity, it is not ready for adoption.
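One way to apply the filter across a shortlist is to record the four answers per candidate and keep only the tools with no gaps. The sketch below assumes a hypothetical `ToolEvaluation` record and treats a blank answer or unavailable input data as a failed question. Judging whether an answer is specific enough still takes a person.

```python
from dataclasses import dataclass


@dataclass
class ToolEvaluation:
    tool: str
    step: str             # what step does this tool improve?
    data_required: str    # what data does it require?
    data_available: bool  # are those inputs available and accessible today?
    output: str           # what output does it produce?
    owner: str            # who owns the result?


def missing_answers(ev: ToolEvaluation) -> list[str]:
    """List which of the four questions still lack a usable answer."""
    gaps = []
    if not ev.step.strip():
        gaps.append("step")
    if not ev.data_required.strip() or not ev.data_available:
        gaps.append("data")
    if not ev.output.strip():
        gaps.append("output")
    if not ev.owner.strip():
        gaps.append("owner")
    return gaps


def ready_for_adoption(evaluations: list[ToolEvaluation]) -> list[str]:
    """Return the names of tools that pass all four questions."""
    return [ev.tool for ev in evaluations if not missing_answers(ev)]
```

Running `missing_answers` on each rejected candidate shows which question to revisit before looking at the tool again.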

See also: AI Integration Checklist for Small Teams

Thinking Prompt

Does this tool improve a specific step, or are we hoping it improves everything?

Next in Series: Part 6: Best AI Writing Tools for Small Teams in 2026

Keep Reading

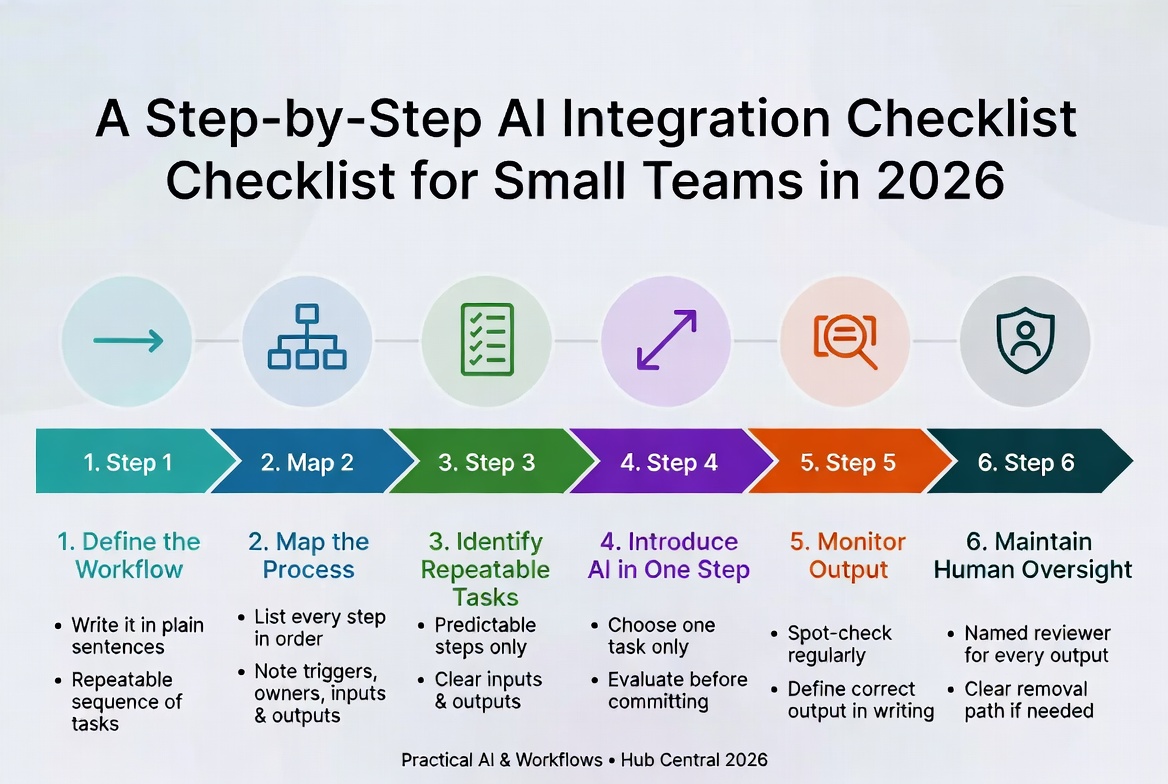

A Step-by-Step AI Integration Checklist for Small Teams in 2026

A disciplined six-step framework for adopting AI without losing control. Define the workflow first, map it, then introduce AI one step at a time – so you actually trust the results.

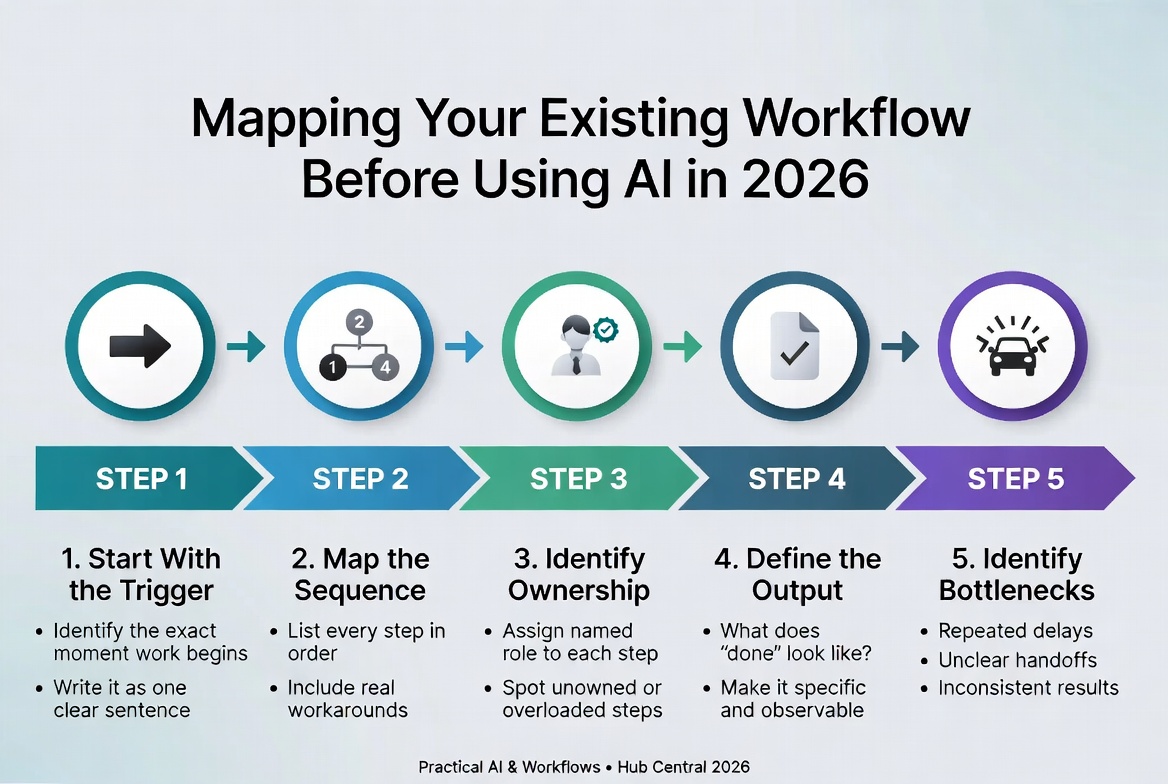

Mapping Your Existing Workflow Before Using AI in 2026

Most teams rush into AI tools without first mapping their current workflow. This simple, no-software method gives you full visibility so you improve the right steps instead of automating confusion.

About Okel Dijital Team

Written by the Hub Central editorial team. We test real AI workflows and WordPress processes to help small teams work faster and smarter.